
12/8/2022 Eigenvectors Mathematica

How is PCA different from linear regression? PCA calculates a new projection of your dataset rather than predicting a target variable, and the new axes are based on the standard deviation of your variables. A variable with a high standard deviation therefore has a higher weight in the calculation of the axes than a variable with a low standard deviation. If you normalize your data, all variables have the same standard deviation, so all variables have the same weight and PCA calculates relevant axes.

Why is standard scaling required before calculating a covariance matrix? PCA is sensitive to variance, so if no standardization is done, variables with a large range will dominate, leading to biased results and non-optimal principal components.

It merely takes four lines to apply the algorithm in Python with sklearn: import the class, create an instance, fit it on the training set, and apply it to the test set. The parameter n_components defines the number of principal components:

```python
import numpy as np
from sklearn.decomposition import PCA

# Placeholder samples (illustrative values): rows are observations, columns are features.
X = np.array([[-1, -1], [-2, -1], [-3, -2], [1, 1], [2, 1], [3, 2]])

pca = PCA(n_components=2)  # keep two principal components
pca.fit(X)

print(pca.explained_variance_ratio_)  # share of the variance captured by each component
print(pca.singular_values_)           # singular values of the selected components
```

A few points to keep in mind:

- Eigenvectors of the covariance matrix are the directions of the axes along which there is the most variance.
- Principal components (PCs) do not have a directly interpretable meaning, since each is a linear combination of the original features.
- PCA tries to compress as much information as possible into the first PC, as much of the remaining information as possible into the second, and so on.

The procedure is:

1. Scale the data by subtracting the mean and dividing by the standard deviation.
2. Compute the eigenvectors of the covariance matrix and the corresponding eigenvalues.
3. Sort the eigenvectors by decreasing eigenvalue and choose the k eigenvectors with the largest eigenvalues; these become the principal components.
4. Derive the new axes by re-orienting the data points according to the principal components.

With that, we have computed the principal components and projected the data points onto the new axes.
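The steps listed above can also be followed by hand with NumPy alone. The block below is a minimal sketch, not the way sklearn implements PCA internally: the toy data, the choice `k = 1`, and all variable names (`X_scaled`, `feature_vector`, `final_data`) are assumptions made for illustration.

```python
import numpy as np

# Hypothetical toy data: 6 samples, 2 features on very different scales.
X = np.array([
    [1.0, 200.0],
    [2.0, 240.0],
    [3.0, 310.0],
    [4.0, 390.0],
    [5.0, 520.0],
    [6.0, 560.0],
])

# Step 1: scale the data (subtract the mean, divide by the standard deviation).
X_scaled = (X - X.mean(axis=0)) / X.std(axis=0)

# Step 2: covariance matrix (features are columns), then its eigen-decomposition.
# eigh is meant for symmetric matrices and returns eigenvalues in ascending order.
cov = np.cov(X_scaled, rowvar=False)
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# Step 3: sort by decreasing eigenvalue and keep the k largest.
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]
k = 1  # assumed number of components to keep
feature_vector = eigenvectors[:, :k].T  # rows are the chosen eigenvectors

# Step 4: re-orient the data along the principal components.
final_data = feature_vector @ X_scaled.T  # shape (k, n_samples)

# Share of the total variance carried by each component.
explained_variance_ratio = eigenvalues / eigenvalues.sum()
print(explained_variance_ratio)
print(final_data.shape)
```

Because both columns are standardized first, neither feature dominates the covariance matrix, even though the raw second column is two orders of magnitude larger than the first.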

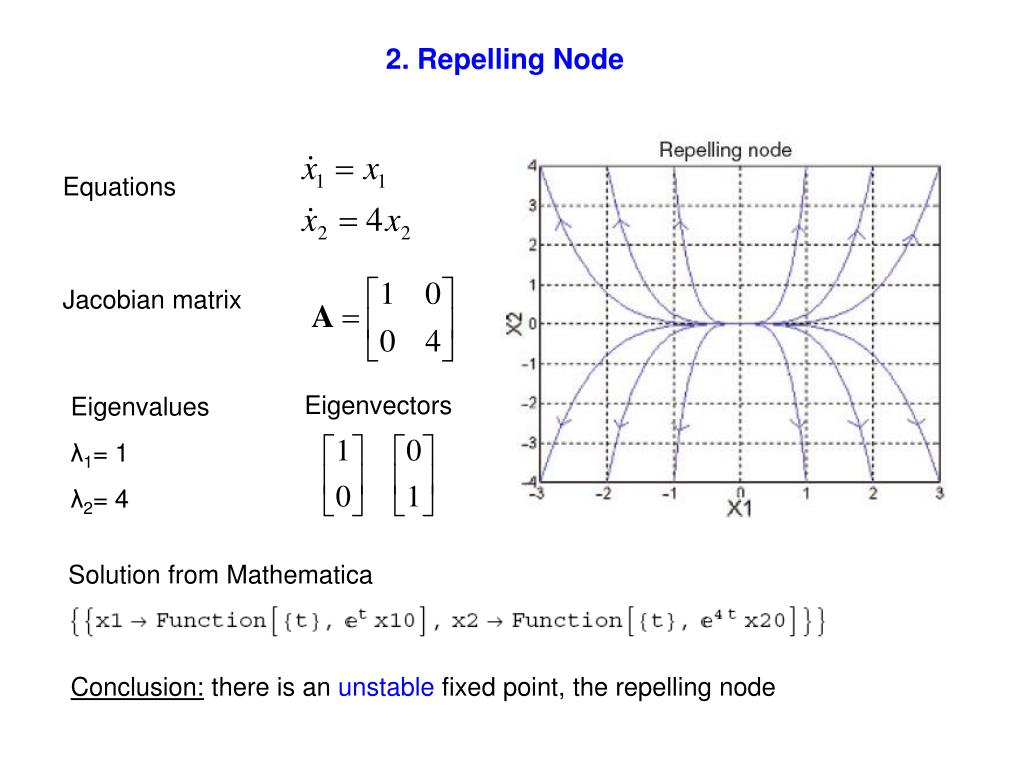

After choosing a few principal components, a new matrix of vectors is created; it is called the feature vector. In the last step, we need to transform our samples onto the new subspace by re-orienting the data from the original axes to the ones that are now represented by the principal components. With the chosen eigenvectors as the rows of the feature vector:

Final Data = Feature-Vector * Transpose(Scaled(Data))

This recasts the data along the principal components' axes.

While the Mathematica documentation does not specifically say that symbolic Hermitian matrices are not necessarily given orthonormal eigenbases, it does say: "For approximate numerical matrices m, the eigenvectors are normalized. For exact or symbolic matrices m, the eigenvectors are not normalized." From this, it's reasonable to guess that if Mathematica isn't smart enough to normalize symbolic results, then it's also probably not smart enough to orthonormalize them, either.

Why is Mathematica not smart enough to do this? For exact input, the resulting eigenvectors can be complex numeric expressions, and calculating norms and the other arithmetic used in the Gram-Schmidt procedure becomes prohibitively expensive in computation time. As a result, Mathematica does not normalize symbolic eigenvectors, because doing so could be catastrophic for performance. In contrast, with floating-point results such performance considerations do not apply, and so by default it orthonormalizes them.

Thus, if numeric results are acceptable, you can simply apply N to your array, and the results will automatically be orthonormal. Otherwise, if numeric results are not acceptable, you will have to manually orthogonalize the individual subspaces.
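To make "manually orthogonalize the individual subspaces" concrete, here is a small NumPy analogue of the idea. It is not Mathematica code and says nothing about how Mathematica works internally: the matrix `A`, the hand-picked `raw_basis`, and the helper `gram_schmidt` are all hypothetical, and `np.linalg.eigh` merely stands in for the numerical route.

```python
import numpy as np

# Real symmetric (hence Hermitian) matrix with a repeated eigenvalue:
# eigenvalue 1 has multiplicity 2, eigenvalue 4 has multiplicity 1.
A = np.array([
    [2.0, 1.0, 1.0],
    [1.0, 2.0, 1.0],
    [1.0, 1.0, 2.0],
])

# A valid but non-orthogonal basis of the eigenvalue-1 eigenspace,
# of the kind an exact solver may hand back.
raw_basis = [np.array([-1.0, 1.0, 0.0]), np.array([-1.0, 0.0, 1.0])]


def gram_schmidt(vectors):
    """Return an orthonormal basis spanning the same subspace as `vectors`."""
    basis = []
    for v in vectors:
        w = v.astype(float)
        for b in basis:
            w = w - np.dot(b, w) * b  # remove the component along b
        norm = np.linalg.norm(w)
        if norm > 1e-12:  # skip vectors that are linearly dependent
            basis.append(w / norm)
    return basis


# Orthonormalize within the degenerate eigenspace only.
ortho_basis = gram_schmidt(raw_basis)

# Numerical route: eigh returns orthonormal eigenvectors as columns.
numeric_values, numeric_vectors = np.linalg.eigh(A)

print(np.dot(raw_basis[0], raw_basis[1]))      # nonzero: raw vectors overlap
print(np.dot(ortho_basis[0], ortho_basis[1]))  # approximately zero
```

Gram-Schmidt is applied per eigenspace because any linear combination of eigenvectors sharing an eigenvalue is still an eigenvector for that eigenvalue, whereas mixing vectors from different eigenspaces would not give eigenvectors at all.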
